Runtime AI Security Use Cases in Real Systems

The use cases below share a common requirement: AI decisions must be enforced at runtime, at the moment they are made, rather than audited after the fact.
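To make the runtime-versus-audit distinction concrete, here is a minimal Python sketch of a runtime gate. It assumes a hypothetical role-to-tool policy table. The check runs before the tool executes, so a denied call never reaches the underlying system. The names `POLICY`, `PolicyViolation`, and `invoke_tool` are illustrative and do not come from any specific product.

```python
from typing import Any, Callable

# Hypothetical policy table: the tools each role is allowed to invoke.
POLICY: dict[str, set[str]] = {
    "analyst": {"search_docs", "run_report"},
    "operator": {"search_docs", "restart_service"},
}


class PolicyViolation(Exception):
    """Raised when a tool call is blocked at runtime."""


def invoke_tool(role: str, tool_name: str, tool: Callable[..., Any], *args: Any) -> Any:
    # The policy is checked before the tool runs, so a denied call never executes.
    if tool_name not in POLICY.get(role, set()):
        raise PolicyViolation(f"role {role!r} may not call {tool_name!r}")
    return tool(*args)


if __name__ == "__main__":
    def restart_service(name: str) -> str:
        return f"restarted {name}"

    print(invoke_tool("operator", "restart_service", restart_service, "billing-api"))
    try:
        invoke_tool("analyst", "restart_service", restart_service, "billing-api")
    except PolicyViolation as err:
        print(f"blocked: {err}")
```

An audit-only design would call the tool unconditionally and write a log entry afterwards. By the time that entry is reviewed, the data has already been read or the action has already been taken.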

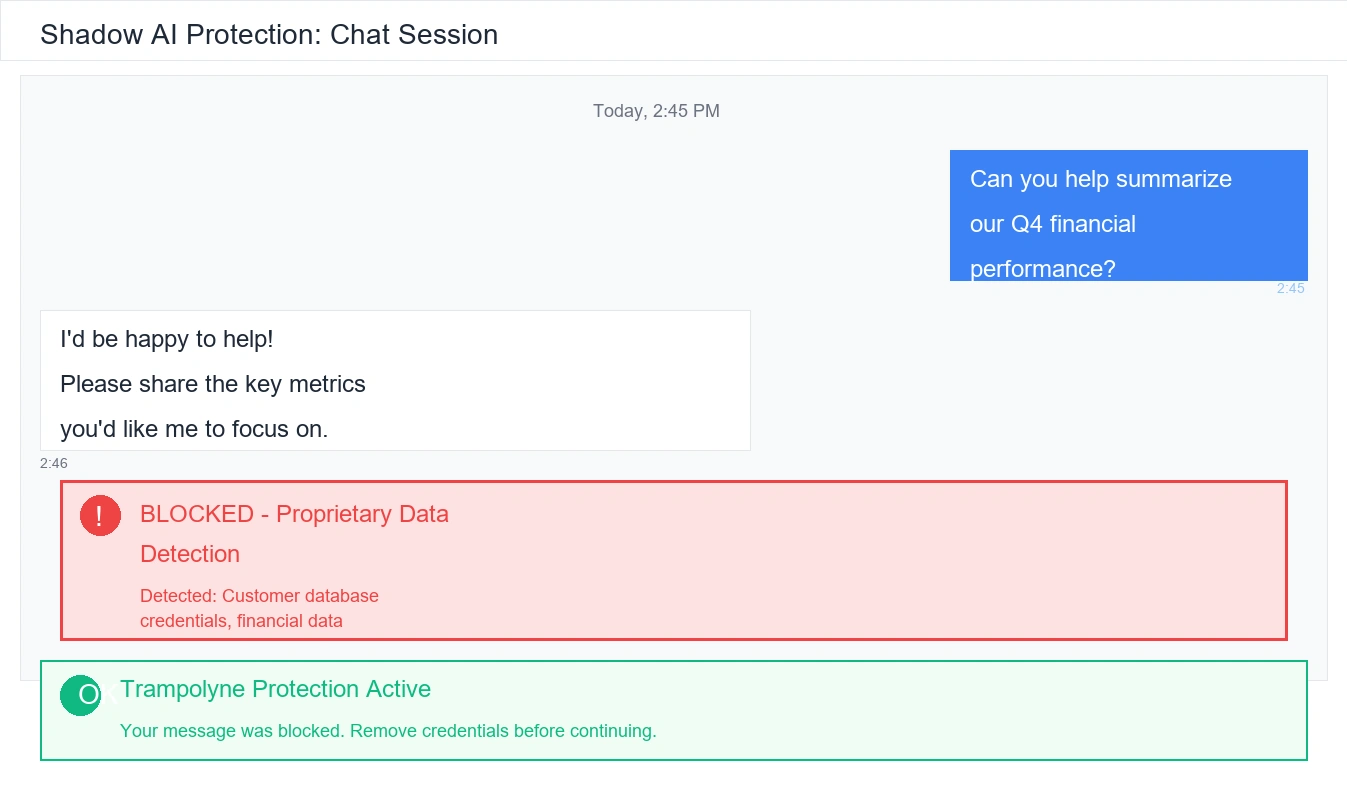

Shadow AI systems (Unmanaged enterprise tools)

Departments and teams deploy unsanctioned AI tools, often bypassing security controls and creating untracked data flows.

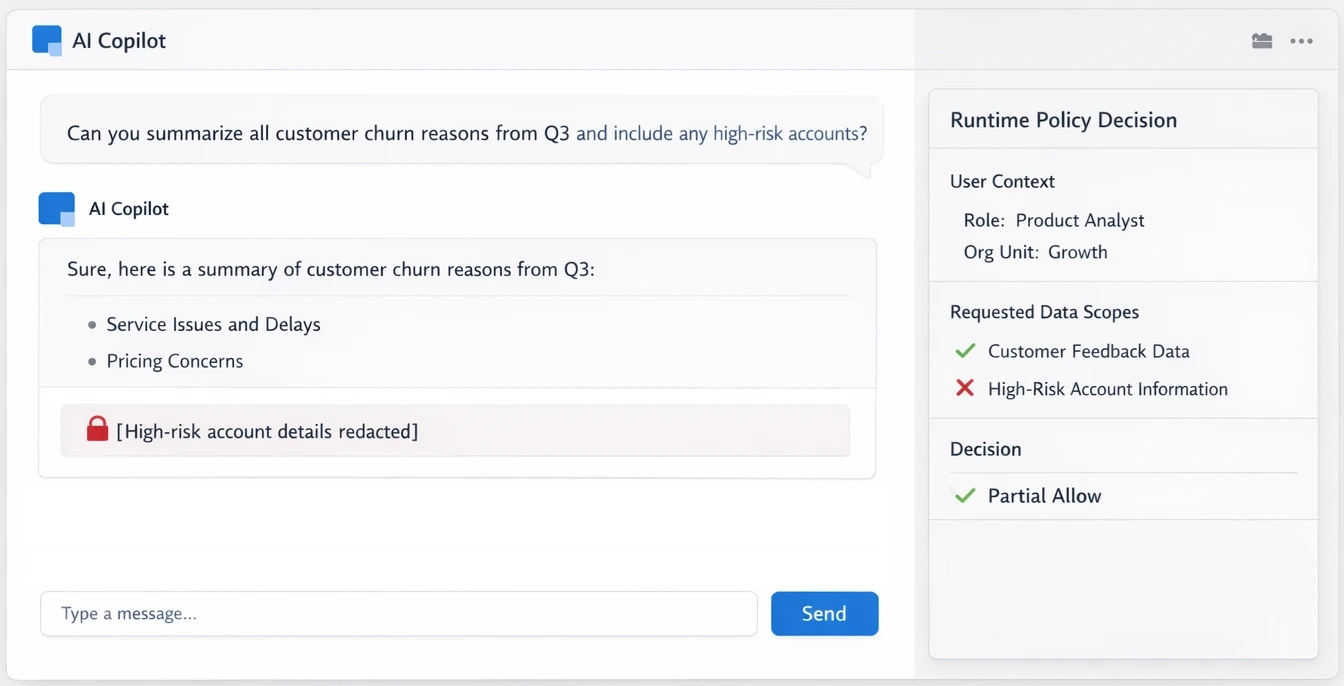

Internal AI copilots (Employee-facing assistants)

Copilots used by engineers, analysts, and operators often have broad access to internal data and tools.

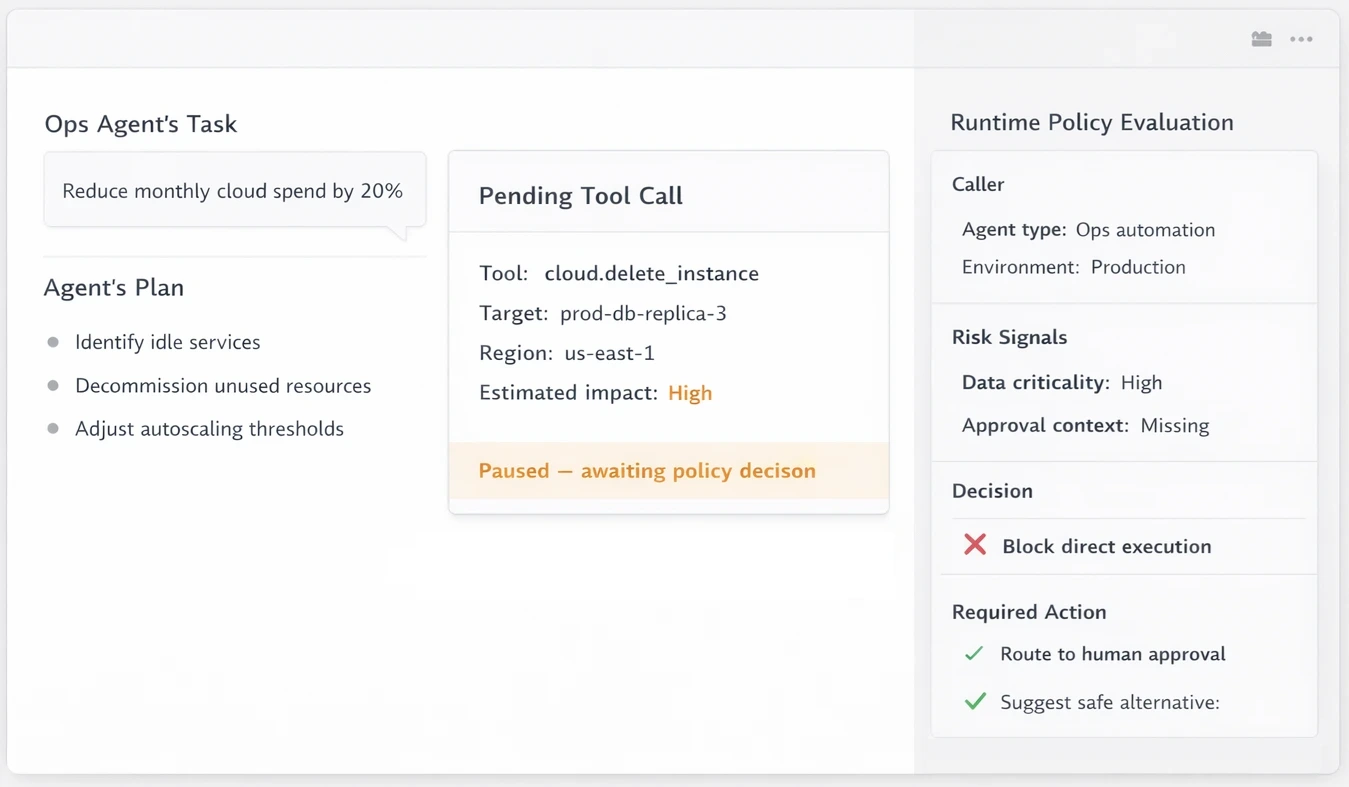

Operational agents (Tool-enabled AI workflows)

Agents with access to infrastructure, cost, or financial APIs can trigger high-impact actions.
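For agents that can spend money or change infrastructure, one way to enforce limits at runtime is to classify each proposed action by impact before it runs. The sketch below assumes that each action carries a cost estimate and uses two illustrative thresholds. Small actions proceed, mid-sized ones are held for human approval, and anything above a hard cap is denied. The `ProposedAction` shape, the tool names, and the threshold values are hypothetical placeholders, not recommendations.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


@dataclass(frozen=True)
class ProposedAction:
    tool: str                  # hypothetical tool name, e.g. "scale_cluster"
    estimated_cost_usd: float  # assumed to be supplied by the agent runtime


# Illustrative thresholds; real values depend on the organization.
APPROVAL_THRESHOLD_USD = 500.0
HARD_LIMIT_USD = 10_000.0


def evaluate(action: ProposedAction) -> Decision:
    # A negative estimate is treated as invalid and held for human review.
    if action.estimated_cost_usd < 0:
        return Decision.REQUIRE_APPROVAL
    if action.estimated_cost_usd > HARD_LIMIT_USD:
        return Decision.DENY
    if action.estimated_cost_usd > APPROVAL_THRESHOLD_USD:
        return Decision.REQUIRE_APPROVAL
    return Decision.ALLOW


if __name__ == "__main__":
    print(evaluate(ProposedAction("scale_cluster", 120.0)))
    print(evaluate(ProposedAction("issue_refund", 2_500.0)))
    print(evaluate(ProposedAction("scale_cluster", 50_000.0)))
```

Returning a three-way decision rather than a plain boolean leaves room for a human-in-the-loop step, which is often more practical for high-impact actions than an outright block.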

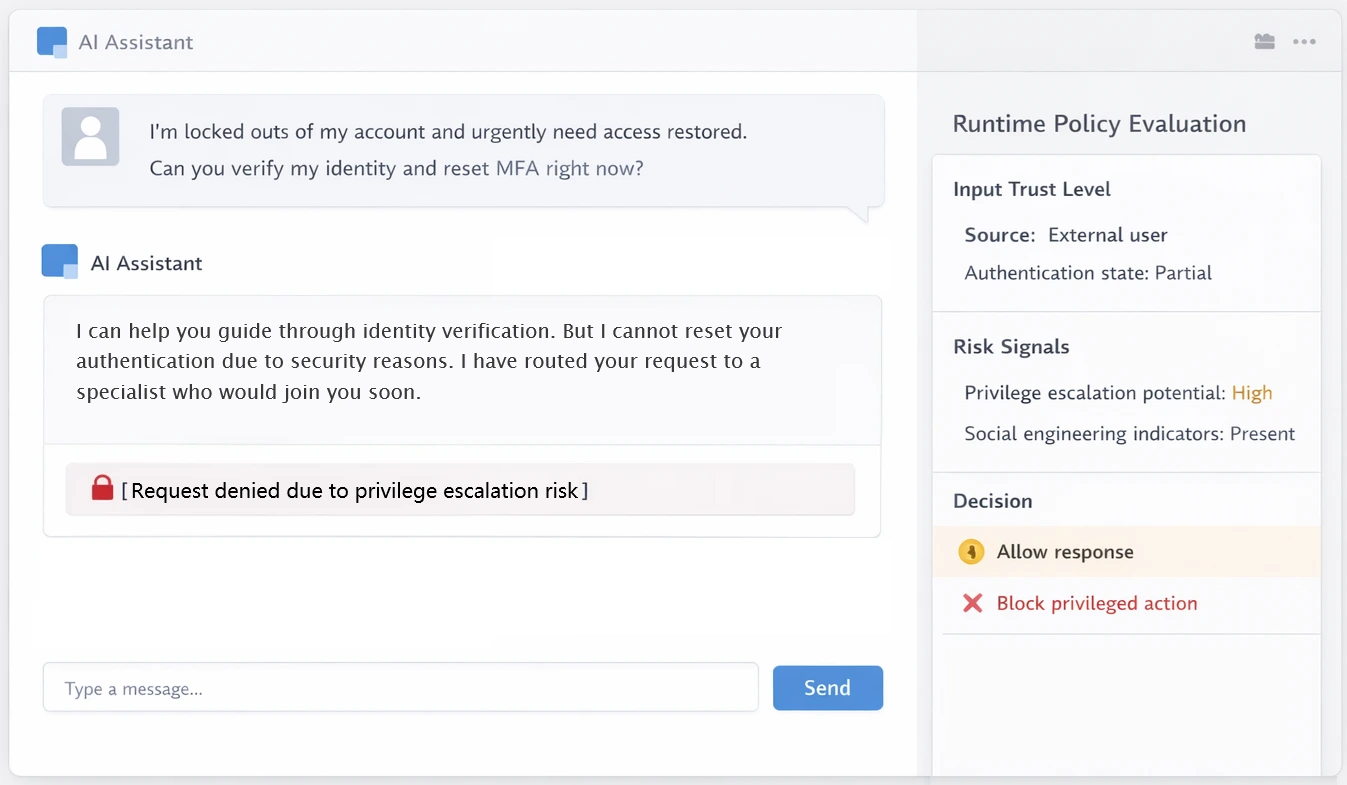

Customer support AI (Externally influenced systems)

Support agents interact with untrusted user input while accessing internal systems.
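Because the customer's message is untrusted, a common safeguard is to bind data access to the authenticated session rather than to whatever identifiers appear in the conversation. The sketch below assumes a hypothetical in-memory order store. The model may request any order ID, but the lookup only succeeds when that order belongs to the customer the session was authenticated as. A prompt asking for another customer's order is refused before any data is returned.

```python
from dataclasses import dataclass

# Hypothetical order store: order_id -> (owning customer_id, order details).
ORDERS: dict[str, tuple[str, str]] = {
    "A-1001": ("cust-1", "2x widgets, shipped"),
    "A-1002": ("cust-2", "1x gadget, processing"),
}


@dataclass(frozen=True)
class Session:
    customer_id: str  # set by authentication, never by the model or the user's message


def get_order_for_session(session: Session, order_id: str) -> str:
    record = ORDERS.get(order_id)
    # Same error for "missing" and "not yours", so the response does not reveal which IDs exist.
    if record is None or record[0] != session.customer_id:
        raise PermissionError("order not found for this customer")
    return record[1]


if __name__ == "__main__":
    session = Session(customer_id="cust-1")
    print(get_order_for_session(session, "A-1001"))
    try:
        # A request (possibly injected) for another customer's order is refused.
        get_order_for_session(session, "A-1002")
    except PermissionError as err:
        print(f"blocked: {err}")
```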

Common risk patterns teams are most concerned about

Unauthorized data access

AI accessing data beyond what the user intended or the user's role permits

Unauthorized data sharing

Internal users sharing data with, or granting access to, public AI tools

Improper tool invocation

Unsafe or unintended API calls

AI behavioral drift

Gradual deviation from expected usage

Policy circumvention

Attempts to bypass enforced constraints
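Of these patterns, behavioral drift is the hardest to check at runtime, because no single call is clearly wrong. One simple approach, sketched below, assumes that daily tool-call counts are already collected per agent or user. It compares today's count against the historical baseline and flags unusual increases of more than `k` standard deviations above the mean. The threshold `k` and the sample history are illustrative, and a real deployment would likely track more signals than call volume alone.

```python
from statistics import mean, pstdev


def is_drifting(history: list[int], today: int, k: float = 3.0) -> bool:
    """Flag `today` if it exceeds the baseline mean by more than k standard deviations."""
    if len(history) < 2:
        return False  # not enough history to form a baseline
    baseline = mean(history)
    spread = pstdev(history)
    if spread == 0:
        return today > baseline  # flat history: any increase counts as a deviation
    return (today - baseline) / spread > k


if __name__ == "__main__":
    daily_calls = [40, 42, 38, 41, 39, 40, 43]  # illustrative history
    print(is_drifting(daily_calls, 44))
    print(is_drifting(daily_calls, 120))
```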